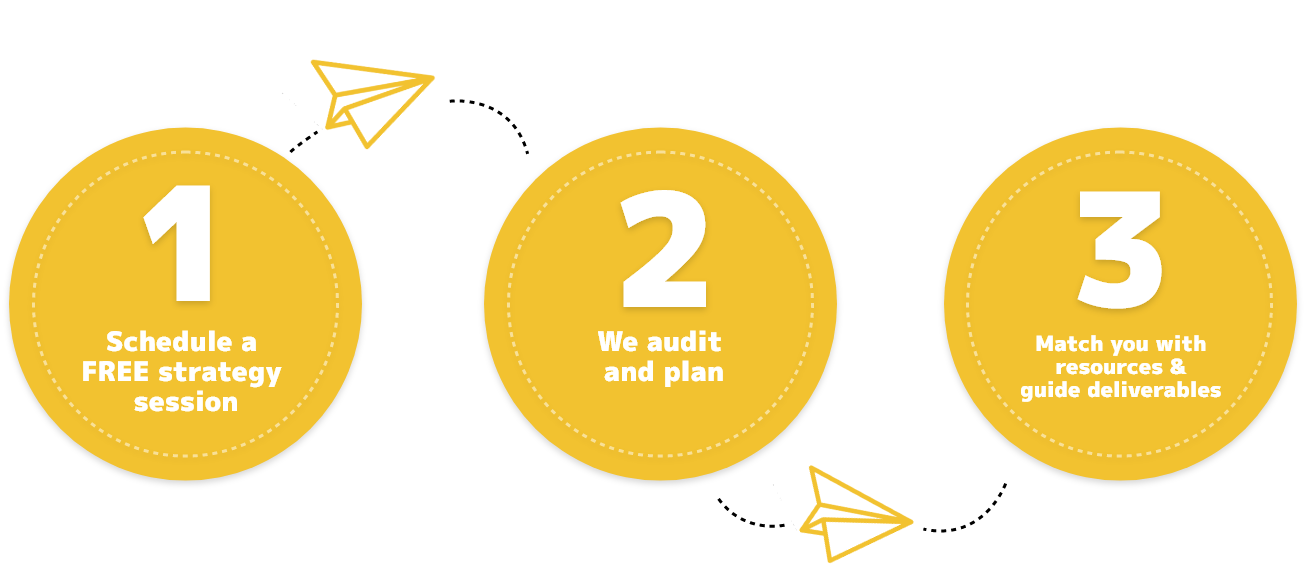

We are ready to help at every step of the way. We are flexible enough to be your digital partner in a strong, ongoing relationship, or we can show you the right path to get started and let you and your team take over once your strategy is in place.

Our marketing technology and digital design geeks are here to save the day. We can help you design and build a robust architecture for your company’s or client’s specific needs. Our services include web design and development, integration and information architecture, wireframing, journey mapping, and agile project management.

We are experts in search engine optimization and digital content management, covering everything from building the infrastructure needed to support message delivery and audience targeting to developing strategies and guiding deliverables. We will work with you to identify and implement tactics that increase web traffic and convert more visitors into paying, loyal customers.

If you are a marketer and you are not thinking about integrating voice and chat into your engagement strategy, then we need to talk. We help marketers plan for and integrate AI and machine learning into their strategies and campaigns. Our goal is to help teach computers to talk the way humans do, so your customers can have natural conversations with your chatbots and a good user experience.

We help your business keep up with the latest trends in social media by working with you to audit, plan, and guide the delivery of a social media strategy designed to connect your company or client with the right communities.

Web content receives the lion’s share of attention in the SEO world, but content encompasses much more.

What should a business do about social media? What about newsletters that convert at a high rate? All content needs a management platform once digital assets expand and take on a life of their own.

Digital Communications Group can train staff and set them up to perform like pros or provide everything in a full-service package.

“The Digital Communications Group is devoted to helping marketing teams and agencies find and leverage the digital and technology resources needed to operate in today’s digital-first culture.”

They provide a very professional but friendly attitude, which is excellent.

We are building the largest knowledgebase of digital strategy and marketing technology tools in the world. Join the martechKB community of experts in building a resource designed to help marketing teams and agencies find and leverage the resources they need.

Campaign Audits

Digital-Focused Go-to-Market Strategies

Marketing Technology Audit

Roadmap and Architecture

NEWS & INSIGHTS

Our technical experts are developing new ways of problem solving and our leaders are at the forefront of researching and writing new industry guidelines. Read our insights on changing regulations, industry trends and other technical topics.